In the last few posts, I have reviewed how interference in the classic AB-AC task, transfer-appropriate processing, infantile amnesia, rapid forgetting, and prospective memory failure can all be explained with only two concepts: memory maintenance failure and memory search failure. I have also argued that all memory search failure results from two distinct causes: information is inaccessible either because the proper “search parameters” are not being used as cues for the memory system, or because the search mechanism itself is suppressing certain “search results.”

So far, we have only discussed the first type of search failure, which I referred to as failures of "cue specification." What about this mysterious second type of failure, involving the computational architecture of memory, and sometimes even the suppression of memory search results?

Contrary to popular belief, "suppression" is not merely a Freudian folk tale, but may have a real neurological basis. Memory suppression - also known as "directed forgetting" - seems to be a capacity that we all may have to some extent, modulated by individual differences.

How might something like this occur in the brain? Consider the following: even if correct and precise cues are used to probe memory content, closely related items may be mistakenly retrieved first. These incorrect search results may be discarded, and memory will be probed again, perhaps with updated search cues. If incorrect items are retrieved again, this process will repeat; eventually, the target item still residing in memory may actually become suppressed because so many related items have been previously identified as incorrect.
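To make that loop concrete, here is a minimal toy sketch in Python. It is not a model from the literature: the item names, the activation values, the `spillover` parameter, and the rule that rejecting an item also inhibits its close neighbors are all assumptions of mine, chosen only to show how repeated rejection of competitors could push a perfectly intact target below the retrieval threshold.

```python
def search_memory(cue_match, similarity, target, threshold=0.5,
                  rejection_penalty=1.0, spillover=0.25, max_probes=10):
    """Probe memory repeatedly, rejecting wrong items as they surface.

    cue_match  : dict mapping item -> how well it matches the cues (assumed values)
    similarity : dict mapping frozenset({a, b}) -> similarity between items a and b
    Returns (retrieved item or None, list of rejected items).
    """
    activation = dict(cue_match)  # work on a copy
    rejected = []
    for _ in range(max_probes):
        best = max(activation, key=activation.get)
        if activation[best] < threshold:
            return None, rejected      # nothing is active enough to retrieve
        if best == target:
            return best, rejected      # search succeeded
        # Wrong item: discard it, and (hypothetically) let some of that
        # inhibition spill over onto similar items, including the target.
        rejected.append(best)
        activation[best] -= rejection_penalty
        for other in activation:
            if other != best:
                pair = frozenset((best, other))
                activation[other] -= spillover * similarity[pair]
    return None, rejected


# Hypothetical example: the cues fit two competitors slightly better than the target.
cue_match = {"sextant": 0.80, "compass": 0.90, "astrolabe": 0.85}
similarity = {
    frozenset(("sextant", "compass")): 0.8,
    frozenset(("sextant", "astrolabe")): 0.8,
    frozenset(("compass", "astrolabe")): 0.8,
}

with_spillover = search_memory(cue_match, similarity, "sextant")
without_spillover = search_memory(cue_match, similarity, "sextant", spillover=0.0)
print(with_spillover)     # target never surfaces, although it is still stored
print(without_spillover)  # target is retrieved once the competitors are rejected
```

In this sketch the only difference between the two runs is whether rejecting a competitor also inhibits its neighbors; the stored cue match of the target is identical in both.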

The behavioral consequence of this iterative memory failure is what is known as the Tip-of-the-Tongue phenomenon, in which many fairly precise memory search cues are available (such as the number of syllables of the target item, the first or ending letters, and several semantically related concepts) but the target item itself is inaccessible (Fletcher & Henson, 2004). This is a perfect example of suppression-related memory search failure.

A related symptom of suppression-related memory search failure is known as retrieval-induced forgetting. This robust effect has been succinctly characterized as when “remembering makes subjects forget related memories” (Levy & Anderson, 2002), and has been demonstrated with a variety of stimuli, including visual objects, word pairs, mock crime scenes, and personality traits. In this case, retrieval practice on some items impairs the later retrieval of related items that were learned at the same time but not practiced. For example, a witness who is asked to describe certain features of a crime scene will be significantly more likely to forget those features of the scene that were not asked about. This suppression-related failure of memory search roughly maps onto Schacter’s sin of blocking.

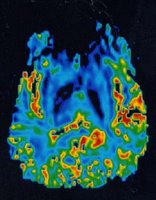

Both of these examples - retrieval-induced forgetting and Tip-of-the-Tongue - may result from a little-discussed feature of neural processing, the dark twin of the popular concept "spreading activation." This dark twin is known as priming-related reduction in neural activity.

This describes a situation in which subsequent presentations of a primed item will show the usual priming-induced facilitation of neural processing (in terms of reaction time, and other measures), but paradoxically a reduction in neural activity. This may happen because the neural circuits representing these concepts essentially become "tuned" to produce this memory trace, as a result of small weight changes after the prime. This results both in faster reaction times and less overall activity.
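One way to see how faster responses and less overall activity can go together is a toy accumulator, sketched below in Python. This is only an illustration under my own assumptions: a single unit integrates its input until it crosses a threshold, the "reaction time" is the number of steps that takes, and "overall activity" is the unit's activity summed over those steps. The function name `present` and the weight values are hypothetical.

```python
def present(weight, threshold=1.0, drive=1.0):
    """Integrate input until threshold; return (steps, total_activity).

    steps          : stand-in for reaction time
    total_activity : activity summed over the whole settling period
    """
    if weight <= 0:
        raise ValueError("weight must be positive or the unit never responds")
    activity = 0.0
    total = 0.0
    steps = 0
    while activity < threshold:
        activity += weight * drive   # stronger weights mean bigger steps
        total += activity
        steps += 1
    return steps, total


weight = 0.125
unprimed = present(weight)   # first presentation

weight += 0.125              # assumed weight change left behind by the prime
primed = present(weight)     # repeated presentation

print(unprimed, primed)
```

With the increased weight, the unit reaches threshold in fewer steps and accumulates less summed activity on the way there, which is the paradoxical pairing described above. Real circuits are of course far more complicated than one accumulating unit.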

How does this explain retrieval-induced forgetting and Tip-of-the-Tongue? Consider this: if an item has been repeatedly associatively primed through the neural processing of related information (as in tip-of-the-tongue or retrieval-induced forgetting), then the likelihood of producing these memory traces may be facilitated, while the overall amount of activity required to do so is decreased. Ultimately, this makes it highly unlikely that related items will become active, resulting in a short-term suppression effect.

Another computational characteristic of the memory search process that can sometimes result in memory search failure comes from the complementary learning systems perspective. This view holds that some brain regions are specialized for quick, one-shot learning of associations, while others are specialized for slow, context-invariant learning of underlying statistical regularities.

According to this framework, memories undergo a slow transition from one neural memory system to another, in which the initial learning of associations is accomplished by the hippocampus, and information from those memories is subsequently transitioned into neocortex over a longer period of time (McClelland et al., 2002; see my presentation for additional background). But if the hippocampus is damaged, as in amnesia, some recent memories will not have been successfully transitioned into neocortex. No matter how specific and precise the memory search cues are, the relevant information cannot be retrieved – simply because it is no longer there! This results in the well-known pattern of temporally graded retrograde amnesia in patients with medial temporal lobe damage. Furthermore, this consolidation process can be interrupted by ongoing neural activity (Wixted, 2004).
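The temporal gradient falls out of even a very crude sketch of this idea. In the Python toy below, every memory is available through the hippocampus as long as it is intact, while its neocortical trace strengthens slowly with age. The exponential form, the one-year time constant, and the retrieval threshold are assumptions I picked for illustration, not values taken from McClelland and colleagues.

```python
import math


def cortical_strength(age_days, tau_days=365.0):
    """Assumed consolidation curve: cortical trace grows slowly toward 1.0."""
    return 1.0 - math.exp(-age_days / tau_days)


def can_retrieve(age_days, hippocampus_intact, threshold=0.5):
    """A memory is retrievable via the hippocampus, or via a strong enough cortical trace."""
    if hippocampus_intact:
        return True
    return cortical_strength(age_days) >= threshold


# Hypothetical memories of different ages, probed before and after a lesion.
ages = [30, 180, 365, 3650]
before_lesion = [can_retrieve(age, hippocampus_intact=True) for age in ages]
after_lesion = [can_retrieve(age, hippocampus_intact=False) for age in ages]

for age, ok in zip(ages, after_lesion):
    print(f"{age:>5} days old: {'retrieved' if ok else 'lost'}")
```

After the simulated lesion, the recent memories are lost while the older, consolidated ones survive, no matter how good the search cues are.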

The final kind of memory failure is "monitoring failure," and will be covered in tomorrow's post. As we shall see, this simple concept can explain some of the most infamous failures of memory, including suggestibility, bias, source misattribution, and even confabulation.

Note: This post is part 4 (part 1, part 2, part 3) in a series of posts, in which I'll review and revise Schacter's "seven sins of memory" according to a new framework of memory failure, one that is both closer to neuroanatomy and wider in scope.

References:

Fletcher, P., & Henson, R. N. A. (2004). Prefrontal cortex and long-term memory retrieval. In R. S. J. Frackowiak, K. J. Friston, C. D. Frith, R. J. Dolan, C. J. Price, S. Zeki, J. Ashburner & W. Penny (Eds.), Human Brain Function (2nd ed., pp. 499-514). London: Elsevier.

Levy, B. J., & Anderson, M. C. (2002). Inhibitory processes and the control of memory retrieval. Trends in Cognitive Sciences, 6, 299-305.

McClelland, J. L., McNaughton, B. L., & O'Reilly, R. C. (2002). Why there are complementary learning systems in the hippocampus and neocortex: Insights from the successes and failures of connectionist models of learning and memory. In T. A. Polk & C. M. Seifert (Eds.), Cognitive modeling (pp. 499-534). Cambridge, MA: MIT Press.

Wixted, J. T., & Stretch, V. (2004). In defense of the signal detection interpretation of remember/know judgments. Psychonomic Bulletin & Review, 11, 616-641.

Related Posts:

The Seven Sins of Memory (Part 1)

The Transience of Memory (Part 2)

Lost Keys: Memory Search Failure (Part 3)

