Based on the variety of evidence presented

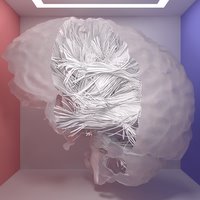

in previous posts, how many memory systems are required to comprehensively explain the existing data on human memory? The idea that there is a prefrontal short-term working memory system is uncontroversial; for example, this system is unimpaired in the famous case of Clive Wearing, who maintains that he has become conscious for the first time roughly every 10 minutes. This patient is capable of carrying on a conversation and clearly manifests the ability to maintain goals and other signs of intelligence.

Converging evidence comes from fMRI studies of dorsolateral prefrontal cortex (dlPFC), in which dlPFC shows sustained activity throughout continuous performance tasks that appears not to be due to general mental effort or concentration (Cohen et al., 1997). Instead, active firing in this region may serve to maintain activity in more posterior regions, which represent specific information relevant to the current task. Therefore, working memory qualifies as a system, according to the definition established earlier, because it has unique computational requirements (to maintain active firing) as well as unique functional characteristics, in that it is responsible for maintaining current goals and information relevant to those goals.

It is also clear that a second memory system exists, one that is subserved by structures in the medial temporal lobe (MTL) and is involved in the longer-term storage of information. Specifically, the entorhinal, perirhinal, and parahippocampal cortices, as well as the hippocampus itself, make up this second system, which is required for the relatively quick learning of new associations. The type of association to be learned determines which of these structures is most critical, with context-rich (episodic) memories requiring the entire complex, and relatively context-free (semantic) memories relying more on the surrounding non-hippocampal MTL structures.

As described above, it is not necessary to propose distinct systems for familiarity and recollection within this long-term memory system, because a single-process “signal detection” model can account for both the neuropsychological and imaging results. Likewise, it is not necessary to propose distinct systems for semantic and episodic memory within this long-term memory system simply because these memory types rely differentially on these MTL structures. According to this view, the hippocampal complex is a unique memory system because it has unique computational requirements (the capacity to represent information sparsely; McClelland et al., 2002) and also has unique functional characteristics, in that destruction of this region results in profound failures of long-term explicit memory (as in amnesia).
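To make the single-process idea concrete, here is a minimal sketch of one common way such a model is formalized. It assumes an equal-variance Gaussian signal detection model, which is an illustrative choice and not necessarily the exact model behind the results discussed in earlier posts. Studied and unstudied items differ only in mean strength along one dimension, and "familiar" versus "recollected" responses differ only in how strict the response criterion is. The function names and parameter values are hypothetical.

```python
from statistics import NormalDist

def response_rates(d, criterion):
    """Hit and false-alarm rates for one strength dimension.

    Assumption: new items ~ N(0, 1), old items ~ N(d, 1); the response is
    "old" whenever memory strength exceeds the criterion.
    """
    hit = 1.0 - NormalDist(mu=d, sigma=1.0).cdf(criterion)
    false_alarm = 1.0 - NormalDist(mu=0.0, sigma=1.0).cdf(criterion)
    return hit, false_alarm

def d_prime(hit_rate, false_alarm_rate):
    """Sensitivity recovered from observed rates: z(H) - z(FA)."""
    z = NormalDist().inv_cdf
    return z(hit_rate) - z(false_alarm_rate)

TRUE_D = 1.5  # hypothetical separation between old and new items

# A lenient criterion stands in for "feels familiar" judgments,
# a strict criterion for "I recollect it" judgments.
lenient = response_rates(TRUE_D, criterion=0.5)
strict = response_rates(TRUE_D, criterion=1.5)

print("lenient criterion:", lenient, "d' =", d_prime(*lenient))
print("strict criterion: ", strict, "d' =", d_prime(*strict))
```

Under these assumptions the two response types produce very different hit and false-alarm rates, yet both recover the same underlying sensitivity, which is the sense in which a single strength signal can stand in for two apparent processes.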

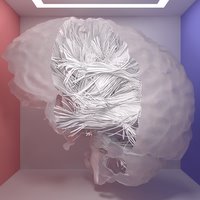

Finally, I propose a third general-purpose memory system, on which both of the previous systems rely. This system is actually a conglomerate of many brain regions, each of which is responsible for the processing of information relevant to specific modalities (vision, hearing, speech, etc.). This is the memory system ultimately responsible for the long-term storage of semantic and episodic memories, after they have undergone a process of memory consolidation. During this consolidation process, the hippocampus and related structures slowly “farm out” the information they represent to the regions of neocortex that are relevant to each characteristic of the to-be-stored memory, through a process analogous to interleaved training in artificial neural network models (McClelland et al., 2002). In this way, the long-term memory system relies on the general-purpose system during consolidation.
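The appeal of interleaved training can be shown with a toy example. The sketch below is not the model from McClelland et al. (2002); it is a single linear unit trained with the delta rule on two hypothetical, overlapping patterns. All names and values are assumptions chosen for illustration. When the new pattern is trained on its own after the old one, the shared weight is overwritten and the old memory degrades; when the two are interleaved, both are learned.

```python
def train(weights, examples, lr=0.1, epochs=200):
    """Delta-rule training of one linear unit; returns the updated weights."""
    w = list(weights)
    for _ in range(epochs):
        for x, target in examples:
            output = sum(wi * xi for wi, xi in zip(w, x))
            error = target - output
            w = [wi + lr * error * xi for wi, xi in zip(w, x)]
    return w

def abs_error(w, example):
    """Absolute difference between the target and the unit's output."""
    x, target = example
    return abs(target - sum(wi * xi for wi, xi in zip(w, x)))

# Two hypothetical memories that share the middle input feature.
old_memory = ([1.0, 1.0, 0.0], 1.0)
new_memory = ([0.0, 1.0, 1.0], 0.0)

# Sequential ("focused") learning: old memory first, then only the new one.
w_seq = train([0.0, 0.0, 0.0], [old_memory])
w_seq = train(w_seq, [new_memory])

# Interleaved learning: old and new memories alternate within each epoch.
w_int = train([0.0, 0.0, 0.0], [old_memory, new_memory])

sequential_error = abs_error(w_seq, old_memory)
interleaved_error = abs_error(w_int, old_memory)
print("error on old memory, sequential: ", round(sequential_error, 3))
print("error on old memory, interleaved:", round(interleaved_error, 3))
```

In this toy setting, sequential training leaves a residual error of about 0.25 on the old memory, while interleaved training drives the error on both memories toward zero.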

Likewise, the working memory system also relies on this general-purpose memory system; for example, in a delayed match-to-sample task, correct matching behavior relies both on the maintenance of the target item and the representation of the current item (Miller et al., 1996). Because each different item is represented by a different pattern of inferotemporal (IT) activity, and because identical items are represented by the same patterns of IT activity, the degree of match between the representation maintained in prefrontal cortex and each item’s IT representation can index whether an item is a match or nonmatch. Evidence for this process comes from the phenomenon of “match enhancement” observed in the firing rates of PFC and IT cells in the case of a match.
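The match computation described above can be sketched in a few lines. This is an illustration only: the activity vectors, the use of cosine similarity as the match index, and the threshold are all assumptions, not quantities reported by Miller et al. (1996).

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two activity patterns."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def is_match(maintained_pfc, current_it, threshold=0.9):
    """Report a match when the maintained and current patterns agree closely.

    The threshold is a hypothetical value chosen for this example.
    """
    return cosine_similarity(maintained_pfc, current_it) >= threshold

# Hypothetical normalized firing rates across four cells.
sample_item = [0.9, 0.1, 0.8, 0.2]     # pattern held in prefrontal cortex
same_item = [0.9, 0.1, 0.8, 0.2]       # IT pattern when the sample reappears
different_item = [0.1, 0.9, 0.2, 0.7]  # IT pattern for a different item

print(is_match(sample_item, same_item))
print(is_match(sample_item, different_item))
```

Identical items yield a similarity of essentially 1 and are classified as matches, whereas the different item yields a similarity of roughly 0.34 and is classified as a nonmatch.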

This three-system framework for memory provides a much clearer view of human memory than the more traditional distinctions examined above. First, the general-purpose neocortical system is responsible for perceptual priming effects and other aspects of implicit memory, whereas the other two systems are crucial for the formation of explicit memory. Second, the hippocampal formation subserves recollection, familiarity, and episodic memory. Third, the medial and dorsolateral regions of prefrontal cortex are involved in the accessing and online manipulation of information from either of the two other systems.

This three-system view also has the advantage of explaining additional phenomena that do not clearly correspond to the distinctions examined above. For example, evidence from early studies of memory seemed to indicate that better retention occurs when items are processed at a more semantic as opposed to perceptual level. A revised view of this phenomenon, known as transfer-appropriate processing, suggests that strength of memory is influenced more by the degree of match between study and test (Morris et al., 1977) than by any inherent superiority of semantic processing over more perceptually based processing. According to this three-system view, results supporting transfer-appropriate processing emerge from an interaction between all three systems, in which the prefrontal system is actively maintaining information in task-relevant parts of the neocortex, while the hippocampal formation is essentially taking “snapshots” of this neocortical activity on a global scale. At test, retrieval cues that more closely elicit the pattern of activity present in neocortex during study will be more effective at eliciting the relevant memory trace from hippocampus, thus resulting in a benefit for test conditions that are compatible with study conditions.
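A small sketch can illustrate why cue-to-snapshot overlap favors compatible study and test conditions. Here a hippocampal "snapshot" is represented as a set of active neocortical features, and retrieval strength is the fraction of the snapshot that a test cue reinstates. The feature labels and the overlap measure are hypothetical simplifications, not part of the original account.

```python
def overlap(snapshot, cue):
    """Fraction of the stored snapshot reinstated by the retrieval cue."""
    return len(snapshot & cue) / len(snapshot)

# Hypothetical snapshots of neocortical activity for the same word,
# studied under semantic versus perceptual processing.
semantic_study = {"word:cat", "meaning:animal", "meaning:pet", "context:lab"}
perceptual_study = {"word:cat", "sound:-at", "font:bold", "context:lab"}

# Hypothetical test cues that re-elicit semantic or perceptual features.
semantic_test = {"meaning:animal", "meaning:pet", "context:lab"}
perceptual_test = {"sound:-at", "font:bold", "context:lab"}

for study_name, snapshot in [("semantic", semantic_study),
                             ("perceptual", perceptual_study)]:
    for test_name, cue in [("semantic", semantic_test),
                           ("perceptual", perceptual_test)]:
        print(f"study={study_name:10s} test={test_name:10s} "
              f"overlap={overlap(snapshot, cue):.2f}")
```

With these made-up features, matched study and test conditions give an overlap of 0.75 and mismatched conditions give 0.25, regardless of whether the study task was semantic or perceptual.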

Finally, the three-system view also provides a parsimonious explanation of various consolidation phenomena. Again, only the working memory system and the long-term memory system are capable of rapidly encoding arbitrary information; the neocortical memory system requires much slower learning. This provides a natural role for sleep as a means of memory consolidation, during which prefrontal activity is minimized and the hippocampus is involved in slowly interleaving new memories with preexisting representations in more posterior neocortical areas. Indeed, this pattern of activity is fairly characteristic of sleep.

In summary, many of the traditional distinctions made between memory systems can be clarified by using a computational and functional definition of “system,” instead of using operational methods. This allows for several memory phenomena to be understood in terms of three memory systems: a hippocampal system responsible for the rapid encoding of experiences and associations, which are later consolidated in neocortex; a prefrontal system responsible for the active maintenance of goals and goal-relevant information by interacting with neocortex; and a general-purpose neocortical system that supports both perceptual processing and implicit memory, such as priming. Together, these three systems can also account for phenomena that were not clearly addressed by previously defined memory systems, such as consolidation and transfer-appropriate processing.

References:

Cohen, J. D., Perlstein, W. M., Braver, T. S., Nystrom, L. E., Noll, D. C., Jonides, J., et al. (1997). Temporal dynamics of brain activation during a working memory task. Nature, 386, 604-608.

McClelland, J. L., McNaughton, B. L., & O'Reilly, R. C. (2002). Why there are complementary learning systems in the hippocampus and neocortex: Insights from the successes and failures of connectionist models of learning and memory. In T. A. Polk & C. M. Seifert (Eds.), Cognitive modeling (pp. 499-534). Cambridge, MA: MIT Press.

Miller, E. K., Erickson, C. A., & Desimone, R. (1996). Neural mechanisms of visual working memory in prefrontal cortex of the macaque. Journal of Neuroscience, 16, 5154-5167.

Morris, C. D., Bransford, J. D., & Franks, J. J. (1977). Levels of processing versus transfer-appropriate processing. Journal of Verbal Learning and Verbal Behavior, 16, 519-533.