Learning Like a Child

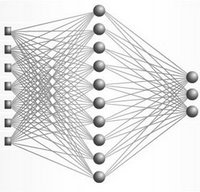

There's a fascinating post over at MindPixel about Elman's neural network modeling of cognitive development, in which he argues that maturational increases in working memory span may provide computational advantages that are not available to neural networks whose span is mature-sized from the start. The evidence comes from computational models of language, in which Elman attempts to recreate the mechanism behind so-called "critical periods" (or "sensitive periods," as qualified by Steven Rose) using simple recurrent networks.

First, Elman begins with staged input: he discretely increases the size of the training corpus, which consists of roughly 25,000 sentences from an artificial generative grammar (including verbs, nouns, prepositions, relative clauses, and various rules of agreement between them). This grammar permits utterances of the type "boys who chase dogs see girls," "girl who boys who feed cats walk," "cats chase dogs," "mary feeds john," and "dogs see boys who cats who mary feeds chase." Though clearly not as complicated as real English, it is constrained enough that incorrect formulations would be likely if the network were performing at chance.

Only the networks trained in a discretized, staged way - a five-stage process of gradual change in the training corpus from mostly simple utterances to mostly complex ones - were able to correctly predict novel grammatically-correct utterances. Remember that these networks are never told the grammatical rules, but learn them autonomously, just as toddlers do. As Elman puts it, "this is a pleasing result, because the behavior of the network partially resembles that of children. Children do not begin by mastering the adult language in all its complexity. Rather, they begin with the simplest of structures, and build incrementally until they achieve the adult language." Essentially, Elman gave his network carefully designed grammar lessons in some non-existent language.
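To make the staged-input idea concrete, here is a minimal sketch of how a five-stage corpus schedule might be assembled. Everything specific in it is an assumption for illustration: the percentages in `STAGE_COMPLEX_PERCENT`, the function names, and the tiny sentence pools are hypothetical, since the post only says that the mix shifts from mostly simple to mostly complex utterances.

```python
import random

# Hypothetical share of complex sentences (percent) in each of the five stages.
# The post only says the mix moves from mostly simple to mostly complex.
STAGE_COMPLEX_PERCENT = [10, 30, 50, 70, 90]


def build_stage_corpus(simple, complex_, percent_complex, size, rng):
    """Sample one stage's corpus with the requested share of complex sentences."""
    n_complex = size * percent_complex // 100
    n_simple = size - n_complex
    corpus = [rng.choice(complex_) for _ in range(n_complex)]
    corpus += [rng.choice(simple) for _ in range(n_simple)]
    rng.shuffle(corpus)  # avoid presenting all complex sentences first
    return corpus


def staged_corpora(simple, complex_, size, seed=0):
    """Return one corpus per stage, ordered from easiest to hardest mix."""
    rng = random.Random(seed)
    return [
        build_stage_corpus(simple, complex_, pct, size, rng)
        for pct in STAGE_COMPLEX_PERCENT
    ]


if __name__ == "__main__":
    simple_pool = ["cats chase dogs", "mary feeds john"]
    complex_pool = [
        "boys who chase dogs see girls",
        "dogs see boys who cats who mary feeds chase",
    ]
    for stage, corpus in enumerate(staged_corpora(simple_pool, complex_pool, 20), 1):
        n_complex = sum("who" in sentence for sentence in corpus)
        print(f"stage {stage}: {n_complex} complex of {len(corpus)}")
```

A network would then be trained on each stage's corpus in order, carrying its weights forward from one stage to the next.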

Yet there is a problem with this training regime: real children are not exposed only to some clearly defined, age-appropriate level of linguistic complexity, but instead are faced with a much more diverse mix of language. To address this problem of realism, Elman then builds a network in which the training corpus remains relatively constant, but memory increases through discrete stages, modeled as changes in the amount of recurrent backpropagated feedback. Amazingly, this network performed much like the previous one, which had complete feedback available to it from the start but received staged input. In other words, limiting the network's memory early on actually produced better performance than giving it full capacity from the beginning!
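One way to picture "memory that grows in stages" is a simple recurrent layer whose context is wiped every few words, with the wipe interval lengthening from stage to stage. The sketch below shows only the forward pass, not training. The reset-based mechanism, the names `srn_hidden_states` and `MEMORY_SCHEDULE`, and the window sizes are all assumptions for illustration, not a reproduction of Elman's actual setup.

```python
import math
import random


def make_weights(rows, cols, rng):
    """Small random weight matrix stored as a list of rows."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def srn_hidden_states(inputs, w_in, w_ctx, memory_window=None):
    """Run a simple recurrent layer over a sequence of input vectors.

    memory_window: wipe the context every this many steps (None = never),
    which caps how far back the layer can "remember".
    """
    n_hidden = len(w_in)
    context = [0.0] * n_hidden
    states = []
    for step, x in enumerate(inputs):
        if memory_window is not None and step > 0 and step % memory_window == 0:
            context = [0.0] * n_hidden  # forget everything seen so far
        hidden = []
        for j in range(n_hidden):
            total = sum(w * xi for w, xi in zip(w_in[j], x))
            total += sum(w * c for w, c in zip(w_ctx[j], context))
            hidden.append(math.tanh(total))
        context = hidden  # the hidden state becomes the next step's context
        states.append(hidden)
    return states


# Hypothetical schedule: short memory in early stages, unlimited memory last.
MEMORY_SCHEDULE = [3, 4, 5, 6, None]

if __name__ == "__main__":
    rng = random.Random(0)
    w_in = make_weights(4, 2, rng)
    w_ctx = make_weights(4, 4, rng)
    words = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, 0.0]]
    for window in MEMORY_SCHEDULE:
        final_state = srn_hidden_states(words, w_in, w_ctx, window)[-1]
        print(window, [round(h, 3) for h in final_state])
```

With a window of 3, the fourth word is processed as if it began a new sequence, whereas with `None` it is still shaped by everything before it. Training would step through the schedule in order while the corpus stays the same.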

This work has fascinating implications for approaches to development, both natural and artificial: If capacity increases are gradual, as physiology would suggest, what causes the clearly defined sensitive periods of development? What environmental or internal conditions have evolved to precipitate increases in memory capacity? Can we induce these changes artificially? What happens to learning when increases in corpus complexity are synchronized with increases in memory capacity, and what does this say about our institutionalized system of "staged input," a.k.a. public education?

And the strangest question of all: could some developmentally-delayed children actually be better off than their peers? Some anecdotal evidence might suggest so: Albert Einstein, after all, was four years old before he could speak and seven before he could read.

1 Comment:

I just discovered that much of this study is currently in doubt because of this paper: Rohde, D.L.T. & Plaut, D.C. (1999). Language acquisition in the absence of explicit negative evidence: How important is starting small? Cognition, 72, 67-109.

These authors were unable to reproduce the findings.
