Emotional Robotics

It may sound like science fiction, but a team led by David Bell from Queen's University is using emotions to guide robotic behavior. Their robot responds to new objects with a cascade of feelings; initial fearfulness gives way to caution and inquisitiveness. After a certain amount of observation, the robot will decide whether those new objects should be avoided or approached, and whether they can be categorized as instances of old objects or as entirely new ones.

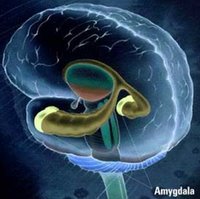

Antonio Damasio, among others, has long held that emotions are a critical part of intelligence. According to this view, emotions and feelings are the "immune system" of the brain; they interface our internal worlds with the external world, and guide us towards responding adaptively to it. Emotions motivate many of our responses to external stimuli; they are easily conditioned and thereby altered with experience; and the experiences that elicit strong emotions are likely to affect our behavior for quite some time.

But these researchers had a dual focus. They emphasized not only the role of emotions but also the importance of childlike cognition, of which heightened emotions are certainly a part. As David Bell put it, "A system that can observe events in an unknown scenario, learn and participate as a child would is a major challenge in AI. We have not achieved this, but we think we've made a small advance." No tantrums, I hope.

For this small advance, his team has won the British Computer Society's 2005 Machine Intelligence Prize. The robot, named "IFOMIND," is based on the Khepera platform. The BBC is also running a short article on the team.

This work builds on earlier advances in neural network and neurorobotic modeling of the mammalian dopamine system, also using the Khepera system. Olaf Sporns and Will Alexander created a robot capable of navigating through an environment, avoiding obstacles, and gripping objects with a moveable arm. Its sensory inputs include color vision and "taste," defined as the conductivity of the objects it encounters. The robot's rate-coded neural network used layers based on real mammalian neural structures, and was provided with artificial "dopamine" fluctuations that depended on the rewards it encountered; objects with lower conductivity were more rewarding. After exploring its environment, the robot learned to stay within the areas of highest reward density. This behavior was never explicitly programmed, but developed autonomously as a result of the interaction between environment and (intelligent?) agent.
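To make the idea of reward-driven behavior concrete, here is a toy sketch in Python. It is not Sporns and Alexander's rate-coded network, just a few lines under loudly stated assumptions: the world is a one-dimensional track, the conductivity values are made up, the "dopamine" signal is modeled as a reward prediction error (actual reward minus expected reward), and optimistic starting expectations stand in for curiosity. The function name `explore_track` and its parameters are hypothetical.

```python
def explore_track(conductivity, steps=2000, learning_rate=0.1):
    """Toy agent on a 1-D track; returns (expected_reward, visits) per location."""
    n = len(conductivity)
    # From the text: objects with lower conductivity are more rewarding.
    reward = [1.0 - c for c in conductivity]
    # Assumption: optimistic starting expectations stand in for curiosity,
    # so unvisited locations look attractive until they have been sampled.
    expected = [1.0] * n
    visits = [0] * n
    pos = 0
    for _ in range(steps):
        visits[pos] += 1
        # Assumption: "dopamine" is a reward prediction error.
        dopamine = reward[pos] - expected[pos]
        expected[pos] += learning_rate * dopamine
        # Move to whichever of left / stay / right currently promises the most.
        options = [i for i in (pos - 1, pos, pos + 1) if 0 <= i < n]
        pos = max(options, key=lambda i: expected[i])
    return expected, visits


# Hypothetical conductivities in [0, 1]; location 4 is the least conductive.
track = [0.9, 0.8, 0.5, 0.2, 0.1, 0.6, 0.9]
expected, visits = explore_track(track)
favorite = visits.index(max(visits))
print("most visited location:", favorite)
```

Nothing in the loop says "go to location 4 and stay there." The agent only updates its expectations and moves toward whatever looks most promising nearby, yet it ends up spending most of its time at the least conductive spot, which is the flavor of emergent behavior described above.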

One might claim that the relationship between these "artificial dopamine" fluctuations and real, human emotion is purely metaphorical, and that the analogy is really just an extreme case of anthropomorphism. As Dylan Evans points out in his article on emotional robotics, however, the same thing was once thought of animals: "Descartes, for example, claimed that animals did not really have feelings just like us because they were just complex machines, without a soul. When they screamed in apparent pain, they were just following the dictates of their inner mechanism. Now that we know the pain mechanism in humans is not much different from that of other animals, the Cartesian distinction between sentient humans and 'machine-like' animals does not make much sense." The same might now be said of the distinction between sentient humans and 'human-like' robots: are we making an artificial distinction between the emotional circuits of the human brain and simpler versions of them in silico?
